In my previous post I discussed workload identity and dived into how it works in AKS (Azure Kubernetes Service). In this post I continue the topic with AWS as the example. From the perspective of a CSP (cloud service provider), any process running on a cloud resource is a workload. Therefore, I’ll start with control plane and node identities. From the perspective of a Kubernetes platform, the term workload mostly refers to applications running in Pods. So later in this article I’ll distinguish two mechanisms for Pod identity: IRSA and EKS Pod Identity.

EKS Control Plane and Node Identity

AWS directly associates one IAM role with the EKS control plane and one IAM role with each node group. We don’t need the extra step of assigning a “managed identity” (as in Azure) to a cluster or to a node group (and then binding a role to that identity). You can see this pattern in Terraform code. Each aws_eks_node_group resource has a node_role_arn attribute that links it to its IAM role, and a cluster_name attribute that links it to the cluster. Each aws_eks_cluster resource has a role_arn attribute for the cluster’s permissions.

The cluster’s IAM role usually has managed policies attached, such as AmazonEKSVPCResourceController and AmazonEKSClusterPolicy. The IAM role assigned to the node group is the same IAM role used by the instance profile of each node. The kubelet process on each node is the main user of this role, and its permissions should be no broader than what the kubelet needs. This role usually has a few managed policies attached, such as AmazonEKSWorkerNodePolicy, AmazonEKS_CNI_Policy, AmazonSSMManagedInstanceCore and AmazonEC2ContainerRegistryReadOnly.
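As a rough sketch of what the node role looks like outside of Terraform, the snippet below writes an EC2 trust policy to a temporary file and prints (without running) the AWS CLI calls that would create the role and attach the four managed policies named above. The role name `demo-eks-node-role` is an assumption made up for illustration, and nothing here touches an AWS account.

```shell
#!/usr/bin/env bash
set -eu

# Hypothetical role name, chosen only for this illustration.
NODE_ROLE_NAME="demo-eks-node-role"
TRUST_FILE="$(mktemp)"

# Nodes are EC2 instances, so the EC2 service is the trusted principal.
cat > "$TRUST_FILE" <<'EOF'
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": { "Service": "ec2.amazonaws.com" },
      "Action": "sts:AssumeRole"
    }
  ]
}
EOF

# Dry run: print the commands instead of executing them.
node_role_commands() {
  echo "aws iam create-role --role-name $NODE_ROLE_NAME --assume-role-policy-document file://$TRUST_FILE"
  for policy in AmazonEKSWorkerNodePolicy AmazonEKS_CNI_Policy \
                AmazonSSMManagedInstanceCore AmazonEC2ContainerRegistryReadOnly; do
    echo "aws iam attach-role-policy --role-name $NODE_ROLE_NAME --policy-arn arn:aws:iam::aws:policy/$policy"
  done
}

node_role_commands
```

Printing the commands keeps the sketch safe to run anywhere. To apply it for real, you would run the printed lines with credentials that are allowed to create IAM roles.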

The node role applies to both self-managed and managed nodes. When Fargate provides the compute capacity, each Fargate profile uses its own IAM role to connect to the cluster and pull container images. This IAM role is known as the Pod Execution Role. For a private cluster, the place to run commands against the cluster would be a bastion host with connectivity to the cluster’s API endpoint. Refer to this post about connectivity to a private cluster.

IAM Roles for Service Accounts (IRSA)

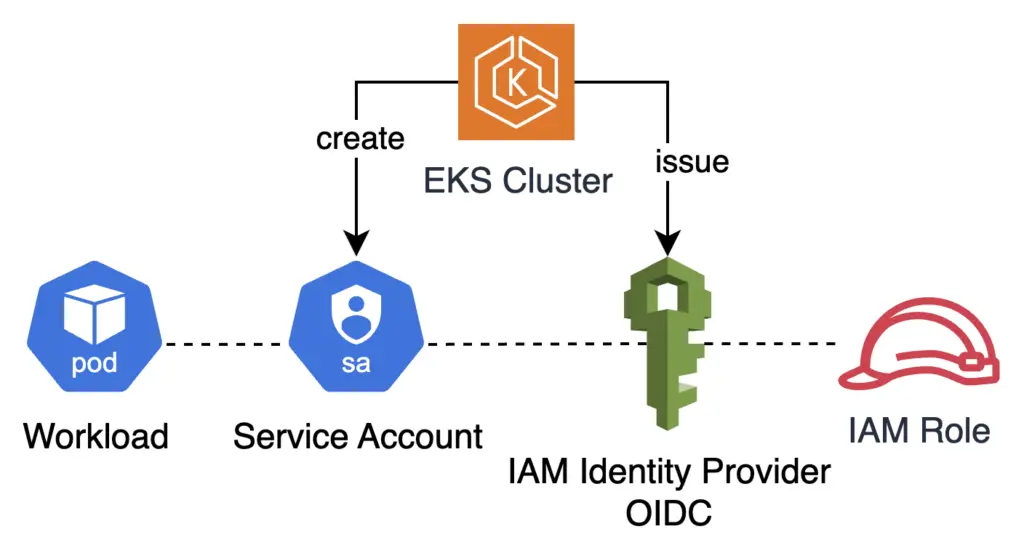

When AWS launched EKS in 2018, Kiam was a popular open-source project for granting Pods access to AWS resources. In 2019, AWS introduced the official mechanism, IRSA (IAM Roles for Service Accounts). IRSA ties a Kubernetes identity (in the form of a Service Account) to an IAM role in AWS. IAM supports web identity federation based on OIDC, and an EKS cluster can act as an OIDC issuer.

This requires configuration in a few places, via the cluster API and via the cloud endpoint. The eksctl utility makes it simple with two commands:

```shell
$ eksctl utils associate-iam-oidc-provider \
    --cluster $CLUSTER_NAME \
    --approve

$ eksctl create iamserviceaccount \
    --cluster=$CLUSTER_NAME \
    --namespace=kube-system \
    --name=aws-load-balancer-controller \
    --role-name AmazonEKSLoadBalancerControllerRole \
    --attach-policy-arn=arn:aws:iam::112233445566:policy/AWSLoadBalancerControllerIAMPolicy \
    --approve
```

The first command creates an IAM OIDC identity provider for the EKS cluster. The second creates an IAM role with the given policy attached, creates a Service Account in Kubernetes, and links the two. These two commands must run under certain conditions. The AWS CLI identity for the first command needs permission to add an OIDC provider. The second needs permission to create an IAM role. In addition, it requires Kubernetes API access to the cluster. So the command needs to run from an environment that can reach both the cluster’s API and the AWS API.
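To make the link between the Service Account and the IAM role more concrete, here is a minimal sketch that prints the kind of trust policy an IRSA role carries, plus the annotation that points the Service Account at the role. The issuer ID `EXAMPLE0123456789` and the region are placeholders I made up. The account ID, namespace, Service Account and role name are taken from the eksctl example. The script only prints text and does not call AWS or the cluster.

```shell
#!/usr/bin/env bash
set -eu

# Placeholder values for illustration; substitute your own.
ACCOUNT_ID="112233445566"
OIDC_ISSUER="oidc.eks.us-east-1.amazonaws.com/id/EXAMPLE0123456789"  # issuer URL without https://
NAMESPACE="kube-system"
SERVICE_ACCOUNT="aws-load-balancer-controller"
ROLE_NAME="AmazonEKSLoadBalancerControllerRole"

# Trust policy: only tokens issued by this cluster's OIDC provider for this
# exact Service Account may call sts:AssumeRoleWithWebIdentity.
irsa_trust_policy() {
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "Federated": "arn:aws:iam::${ACCOUNT_ID}:oidc-provider/${OIDC_ISSUER}"
      },
      "Action": "sts:AssumeRoleWithWebIdentity",
      "Condition": {
        "StringEquals": {
          "${OIDC_ISSUER}:aud": "sts.amazonaws.com",
          "${OIDC_ISSUER}:sub": "system:serviceaccount:${NAMESPACE}:${SERVICE_ACCOUNT}"
        }
      }
    }
  ]
}
EOF
}

# Annotation that tells EKS which role the Service Account maps to.
irsa_annotation() {
  echo "eks.amazonaws.com/role-arn=arn:aws:iam::${ACCOUNT_ID}:role/${ROLE_NAME}"
}

irsa_trust_policy
irsa_annotation
```

The real issuer for a cluster can be read with `aws eks describe-cluster --name $CLUSTER_NAME --query "cluster.identity.oidc.issuer" --output text`. Note that the issuer appears in both the principal and the condition keys, so the trust policy is specific to one cluster.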

The IAM identity provider is somewhat similar to a managed identity with an OIDC federated credential in Azure. However, unlike a managed identity, here in AWS we cannot create the OIDC identity provider until after the cluster has been created. In other words, the lifecycle of the OIDC web identity is coupled to the cluster lifecycle. We have to create a new web identity every time we create a new EKS cluster. In large organizations, the permission to create a new web identity is highly restricted.

EKS Pod Identity

There are a few other limitations with IRSA. As this blog post suggests:

Further, cluster administrators have to update the IAM role trust policy each time the role is used in a new cluster during scenarios like blue-green upgrades or failover testing. Additionally, as customers grow their EKS cluster footprint, due to the per cluster OIDC provider requirement in IRSA, customers run into the per account OIDC provider limit. Similarly, as they scale the number of clusters or Kubernetes namespaces in which an IAM role is used, they run into IAM trust policy size limit, which makes them duplicate the IAM roles to overcome the trust policy size limit.

AWS introduced the new mechanism, “EKS Pod Identity”, at re:Invent 2023. With this mechanism, users can hook up an IAM role directly to a Kubernetes service account, without resorting to a web identity and OIDC integration. Users just need to create a Pod Identity Association, using the CreatePodIdentityAssociation API, with the following parameters:

- Cluster name

- Namespace

- ARN of the IAM role

- Service account name

Both the AWS CLI and eksctl already support the CreatePodIdentityAssociation API. Before creating a Pod Identity Association, we need to install the “Amazon EKS Pod Identity Agent” add-on and ensure that the node role has the required permission. That is because the agent needs to call the AssumeRoleForPodIdentity API. We also need an IAM role whose trust policy principal is “pods.eks.amazonaws.com”, with our own choice of resource tags as conditions. Note that another implicit prerequisite is that the program running in the Pod uses a newer version of the AWS SDK to access cloud resources.
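The prerequisites above can be sketched roughly as follows. The script prints a trust policy for the Pod Identity principal and then prints (without running) the two AWS CLI calls that install the agent add-on and create the association. The cluster name, namespace, service account and role ARN are placeholders I made up for illustration. The sketch leaves out the optional tag-based conditions, which you would add to suit your own tagging scheme.

```shell
#!/usr/bin/env bash
set -eu

# Placeholder values for illustration; substitute your own.
CLUSTER_NAME="demo-cluster"
NAMESPACE="default"
SERVICE_ACCOUNT="demo-app"
ROLE_ARN="arn:aws:iam::112233445566:role/demo-app-role"

# Trust policy: the EKS Pod Identity service principal may assume the role
# and tag the session. No cluster-specific value appears in it.
pod_identity_trust_policy() {
  cat <<'EOF'
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": { "Service": "pods.eks.amazonaws.com" },
      "Action": ["sts:AssumeRole", "sts:TagSession"]
    }
  ]
}
EOF
}

# Dry run: print the commands instead of executing them.
pod_identity_commands() {
  echo "aws eks create-addon --cluster-name $CLUSTER_NAME --addon-name eks-pod-identity-agent"
  echo "aws eks create-pod-identity-association --cluster-name $CLUSTER_NAME --namespace $NAMESPACE --service-account $SERVICE_ACCOUNT --role-arn $ROLE_ARN"
}

pod_identity_trust_policy
pod_identity_commands
```

Because the trust policy names only the service principal, the same role can be prepared before any cluster exists and reused across clusters.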

This blog post has good details, including a diagram and a walk-through.

Comparison

Both EKS Pod Identity and IRSA are here to stay. I’m afraid this is going to create confusion. I put together the following table to compare them:

| | IRSA | EKS Pod Identity |
|---|---|---|
| Pros | – In use since 2019<br>– Supports EKS, EKS-A and ROSA<br>– Supports all EKS versions | – Supports role session tags<br>– No dependency on an OIDC identity provider<br>– Create an IAM role once for all clusters; the role can be created before the cluster<br>– Cross-account access through resource policies and chained AssumeRole operations |
| Cons | – Cannot create the OIDC identity provider until the cluster is ready<br>– One OIDC provider per cluster, with the risk of hitting the quota<br>– Trust policy sprawl as more clusters are created | – The program has to use a newer version of the SDK<br>– Only supports EKS<br>– The Pod Identity Agent (DaemonSet) can’t run on Fargate |

The blog post also contains a long comparison table. In the near future, I will have to check the SDK version of a workload in order to assess whether EKS Pod Identity will work for it. This is a restriction because it depends on the software vendor disclosing the SDK version used. The EKS cluster also needs to run the Pod Identity Agent as a DaemonSet on its nodes. On the other hand, go with IRSA if portability between EKS, EKS-A and ROSA is a concern, because the IAM service principal pods.eks.amazonaws.com is dedicated to EKS.

The blog post also gives the migration steps as follows:

- Ensure the EKS cluster version is 1.24 or later, and install the EKS Pod Identity Agent add-on.

- Ensure the SDK running in the Pod meets the version requirement.

- Update the IAM role’s trust policy with the new principal “pods.eks.amazonaws.com”.

So the EKS Pod Identity mechanism still requires an IAM role. It does not require an OIDC identity. The service account connects to the IAM role via an agent on the node.
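The version check in the first migration step is easy to script. Below is a minimal sketch that assumes a `sort` with version-sort support (`-V`, as in GNU coreutils). The helper names `version_at_least` and `check_cluster_version` are my own, and the version strings passed in are sample inputs rather than values read from a real cluster.

```shell
#!/usr/bin/env bash
set -eu

# Minimum cluster version stated in the migration steps.
MIN_VERSION="1.24"

# Succeeds when $1 >= $2. The pair (minimum, actual) is fed to sort -V -C,
# which exits 0 only if the input is already in ascending version order.
version_at_least() {
  printf '%s\n%s\n' "$2" "$1" | sort -V -C
}

# Report whether a given cluster version is new enough for Pod Identity.
check_cluster_version() {
  if version_at_least "$1" "$MIN_VERSION"; then
    echo "ok: $1 meets the minimum $MIN_VERSION"
  else
    echo "too old: $1 is below $MIN_VERSION"
  fi
}

check_cluster_version "1.29"
check_cluster_version "1.23"
```

For a real cluster, the version could be obtained with `aws eks describe-cluster --name "$CLUSTER_NAME" --query cluster.version --output text` and passed to `check_cluster_version`. A plain string comparison would not do here, since it would rank 1.9 above 1.24.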

Summary

A good design concerns not only functionality but also a streamlined configuration experience. EKS Pod Identity is a great improvement over IRSA and a step in the right direction. It came out only two months ago, so it is still too early to adopt for existing workloads, especially without knowing the workload details. For those, I tend to use Pod Identity as a backup mechanism when IRSA isn’t available for some reason. However, I recommend starting to introduce the Pod Identity mechanism for all new EKS clusters and new workloads.